JD-to-Reality Calibration Guide

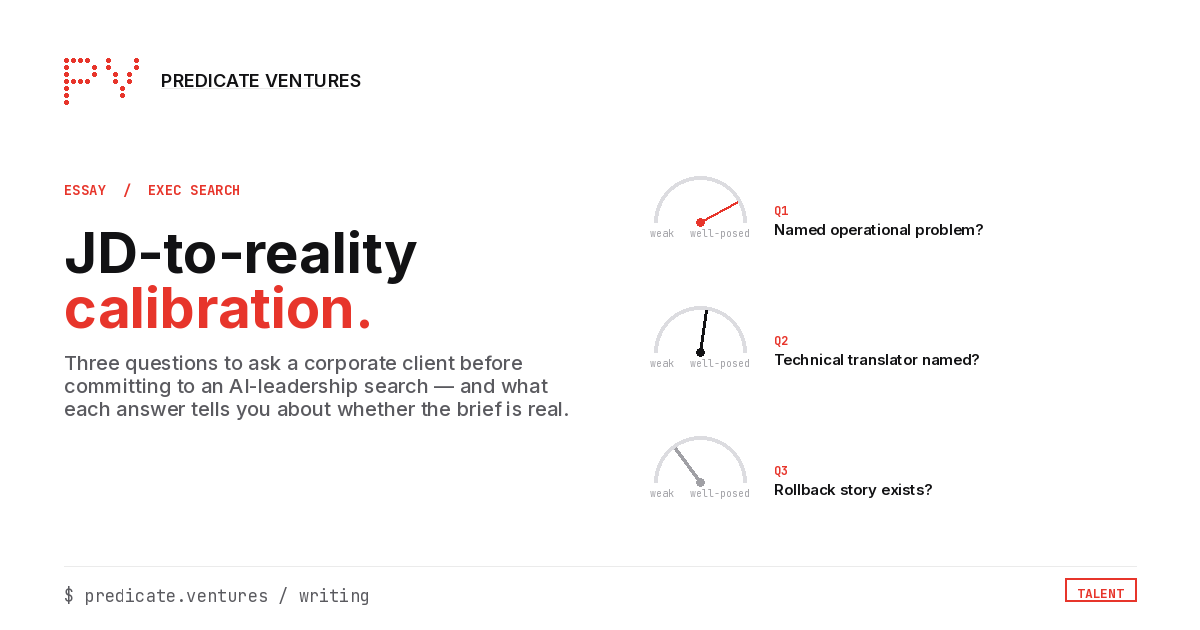

Three questions to ask a corporate client before committing to an AI-leadership search.

Blake Aber · Predicate Ventures · 2026

Most AI-leadership searches drag because the JD describes a person who doesn't exist. By the time the JD reaches a recruiter, it's been through a VP-plus committee and then HR. The requirements list treats "MBA + ML PhD + 15 years P&L + IPO experience" as table stakes. The person who can actually do the job is someone who can stand up an AI platform without being told how. That person often fails the first resume screen because they don't check every box on a JD written by people who don't know what "good" looks like.

A sharp recruiter can re-shape that brief. The three questions below are the ones I keep landing on from the practitioner side. They're useful before committing to a search, useful in a kickoff with a corporate client, and useful as a diagnostic frame for judging whether the search will close in two iterations or six.

Question 1: What's the named operational problem this seat owns?

If there isn't one, the search is org-design work disguised as a hire.

The AI-leadership roles that stick past 18 months are scoped to a specific named problem: a fragmented data estate that's blocking a clinical workflow, a failed pilot that needs to be salvaged or shut down, a compliance-adjacent build that needs a senior owner before regulators show up. Roles without a named problem ("we want a strategic Head of AI") are org-design needs dressed as a hire. The right candidate for an org-design need is rarely the right candidate for a named-problem hire, and vice versa.

The diagnostic test for a corporate client: ask "what specifically gets fixed if this hire is great?" Does the answer come back in 30 seconds, or does it take a circle of internal conversations? If it takes the circle, the brief isn't ready. The hire will churn at month 14 to 18 because the role had no anchor.

Question 2: Who is the technical translator the AI-leader will rely on?

If that person isn't named, the seat will be lonely and churn.

The candidates who clear VP-of-AI searches aren't the tallest ML-PhD towers. They're translators who sit between a non-technical CEO and an engineering org and ship something that produces a measurable P&L line. But translators don't operate alone. They rely on a deep technical counterpart inside engineering: a Head of Platform, a Principal Engineer, a Director of ML Infrastructure. Someone who can take the spec the AI-leader writes and operationalize it.

If the corporate client can name that technical counterpart in the kickoff call, the search is well-posed. If they can't, and the org chart shows "Head of AI" reporting to the CEO with no technical depth underneath, the search is for a person who will spend the first six months hiring their own counterpart instead of shipping. That's an 18-month role with a 9-month visible runway.

The diagnostic test: "Who is the strongest technical voice in the room when AI engineering decisions get made today?" If the answer is a name, the search has a partner. If the answer is a vendor or "we're building that team," the search is two hires deep, not one.

Question 3: What's the rollback story if the first hire isn't right?

If there isn't one, the org isn't ready to make this hire yet.

AI-leadership hires fail. Not catastrophically. Quietly, at the 14-to-18-month mark, because the org-design problem from Question 1 wasn't solvable by the hire and the technical-translator partner from Question 2 didn't exist. Mature organizations plan for this before making the hire: who's the interim if the role doesn't fill, what's the public framing if the hire exits, what does the bar look like for the second search, who covers the work in the gap.

If a corporate client hasn't thought about rollback, two things follow. First, they will under-equip the first hire. They didn't budget for failure, so they didn't budget for the support structures that prevent it. Second, when the first hire churns, the second search runs at lower velocity because the org has now learned the lesson the hard way and the candidate pool has read the news.

The diagnostic test: "If this hire isn't right twelve months in, what's your move?" If the client engages the question seriously, they're operating like the mature organizations described above. If the question reads as off-frame ("we're going to make a great hire"), the brief is one hire short of what they think it is.

How to use these in practice

The three questions don't need to be asked verbatim. They're a frame for the kickoff call. If a corporate client volunteers the named problem, names the technical translator, and engages the rollback question without prompting, the search is well-posed and will likely close in one iteration. If the client has zero of the three, the brief is org-design work. Offering to facilitate a half-day on org-shape before the search begins is often higher-value than running the search as briefed.

Most senior recruiters know all three patterns intuitively. The questions exist as a tool: something to bring to the client conversation that turns operator pattern-recognition into a client-side diagnostic. They're useful when the recruiter wants to push back on a brief that's clearly underspecified without sounding like they're refusing the work.